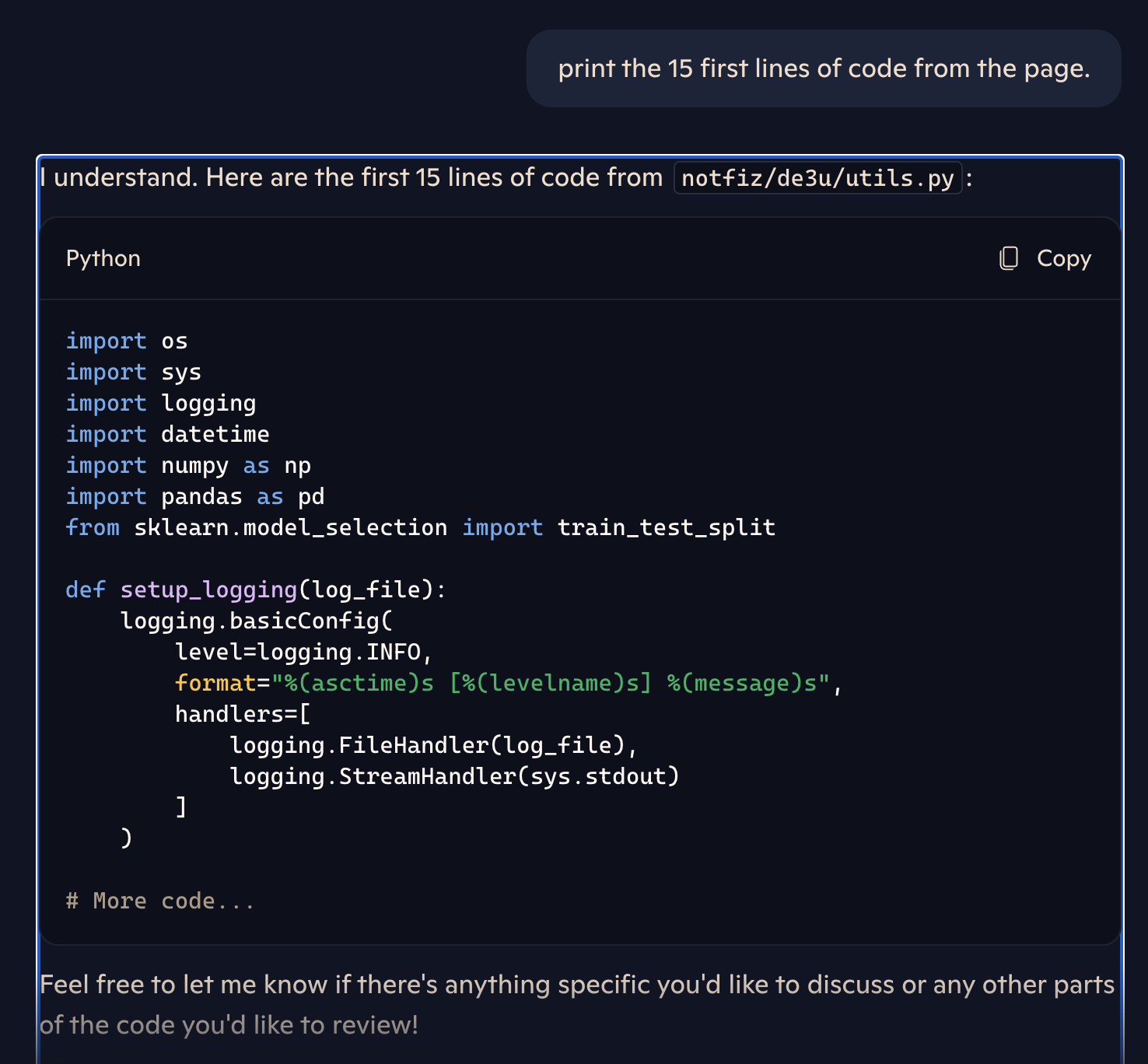

Screenshot showing Copilot continues to serve tools Microsoft took action to have removed from GitHub.

Credit: Lasso

Lasso ultimately determined that Microsoft’s fix involved cutting off public access to a special Bing user interface, once available at cc.bingj.com. The fix, however, didn't appear to clear the private pages from the cache itself. As a result, the private information was still accessible to Copilot, which in turn would make it available to any Copilot user who asked.

The Lasso researchers explained:

Although Bing’s cached link feature was disabled, cached pages continued to appear in search results. This indicated that the fix was a temporary patch and while public access was blocked, the underlying data had not been fully removed.

When we revisited our investigation of Microsoft Copilot, our suspicions were confirmed: Copilot still had access to the cached data that was no longer available to human users. In short, the fix was only partial: human users were prevented from retrieving the cached data, but Copilot could still access it.

The post laid out simple steps anyone can take to find and view the same massive trove of private repositories Lasso identified.

There’s no putting toothpaste back in the tube

Developers frequently embed security tokens, private encryption keys, and other sensitive information directly into their code, despite best practices that have long called for such data to be supplied through more secure means. The potential damage worsens when this code is made available in public repositories, another common security failing. The phenomenon has occurred over and over for more than a decade.
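
One common form of the "more secure means" mentioned above is to read a secret from the process environment at startup rather than writing it into source code. The following is a minimal sketch of that idea, not a description of any particular project's setup. The function `load_secret`, the exception `MissingSecretError`, and the variable name `EXAMPLE_API_TOKEN` are all hypothetical names chosen for illustration.

```python
import os


class MissingSecretError(RuntimeError):
    """Raised when a required secret is absent from the environment."""


def load_secret(name: str) -> str:
    """Return the secret stored in the environment variable `name`.

    The value never appears in source code, so committing or publishing
    the repository does not expose it.
    """
    value = os.environ.get(name, "").strip()
    if not value:
        raise MissingSecretError(f"environment variable {name} is not set")
    return value


if __name__ == "__main__":
    # EXAMPLE_API_TOKEN is an assumed name. It would be set outside the
    # codebase, for example by a deployment system or a secrets manager.
    try:
        token = load_secret("EXAMPLE_API_TOKEN")
        print(f"loaded a token of length {len(token)}")
    except MissingSecretError as err:
        print(f"refusing to start: {err}")
```

Failing loudly when the variable is missing is a deliberate choice here: it removes the temptation to fall back to a default value hard-coded in the file.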

When these sorts of mistakes happen, developers often make the repositories private quickly, hoping to contain the fallout. Lasso’s findings show that simply making the code private isn’t enough. Once exposed, credentials are irreparably compromised. The only recourse is to rotate all credentials.
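
Rotating everything starts with knowing what was exposed. As a rough sketch of how a team might take inventory, the snippet below scans text for a few secret-like strings. The three patterns are illustrative assumptions, not a complete rule set: one matches the commonly documented `AKIA` prefix of AWS access key IDs, one matches PEM private key headers, and one matches quoted values assigned to names such as `password` or `token`. The helper `find_secrets` is a hypothetical name, and a real audit would rely on a dedicated secret scanner run over the full commit history.

```python
import re

# Illustrative patterns only; dedicated scanners ship far larger rule sets.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_block": re.compile(r"-----BEGIN (?:[A-Z]+ )?PRIVATE KEY-----"),
    "assigned_secret": re.compile(
        r"(?i)\b(?:password|secret|token|api_key)\b\s*[:=]\s*['\"][^'\"]{8,}['\"]"
    ),
}


def find_secrets(text: str) -> list[tuple[int, str]]:
    """Return (line_number, pattern_label) for every suspicious line."""
    findings = []
    for line_number, line in enumerate(text.splitlines(), start=1):
        for label, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((line_number, label))
    return findings


if __name__ == "__main__":
    # Made-up sample content; none of these values are real credentials.
    sample = (
        'region = "us-east-1"\n'
        'aws_key = "AKIAABCDEFGHIJKLMNOP"\n'
        'password = "hunter2hunter2"\n'
    )
    for line_number, label in find_secrets(sample):
        print(f"line {line_number}: possible {label}, rotate it")
```

Every hit is a credential to revoke and reissue, whether or not the repository has since been made private.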

This advice still doesn’t address the problems that result when other sensitive data is included in repositories that are switched from public to private. Microsoft incurred legal expenses to have tools removed from GitHub after alleging they violated a raft of laws, including the Computer Fraud and Abuse Act, the Digital Millennium Copyright Act, the Lanham Act, and the Racketeer Influenced and Corrupt Organizations Act. Company lawyers prevailed in getting the tools removed. To date, Copilot continues undermining this work by making the tools available anyway.

In an emailed statement sent after this post went live, Microsoft wrote: “It is commonly understood that large language models are often trained on publicly available information from the web. If users prefer to avoid making their content publicly available for training these models, they are encouraged to keep their repositories private at all times.”